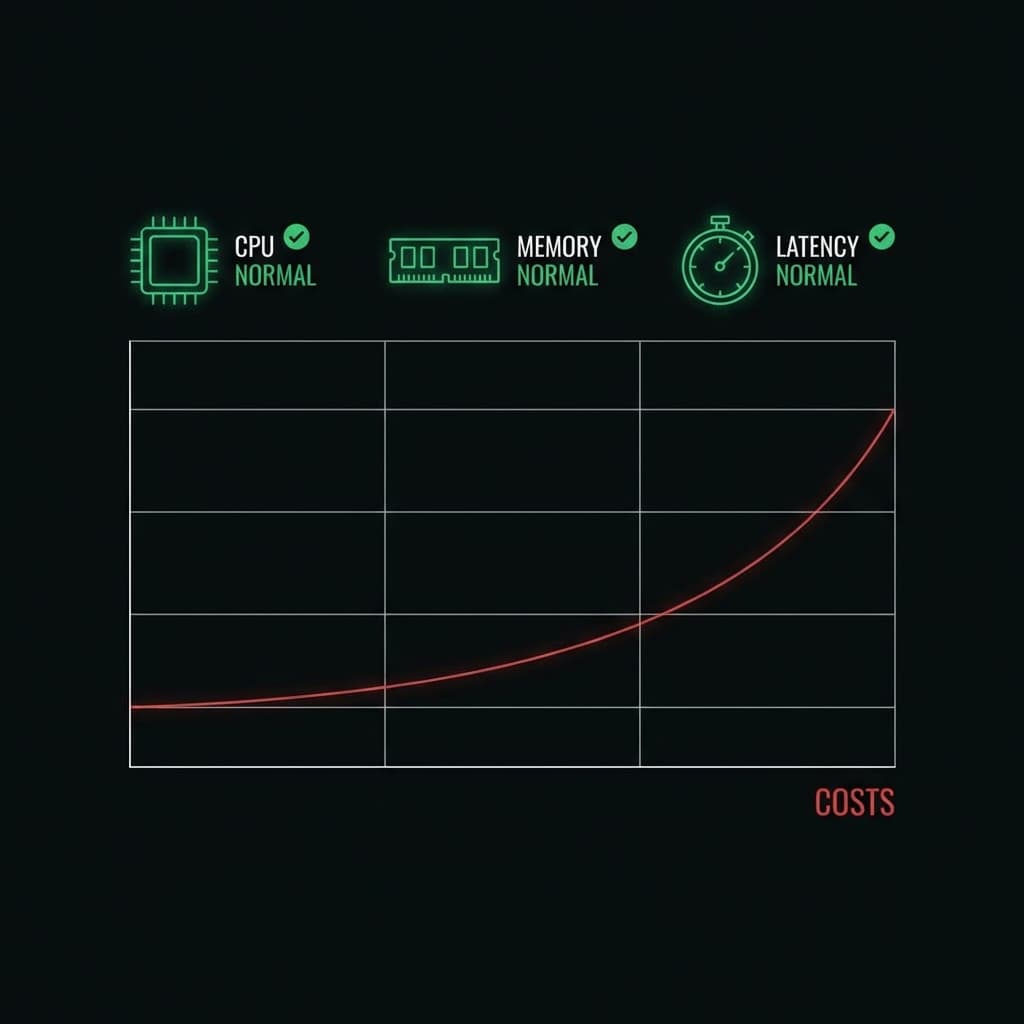

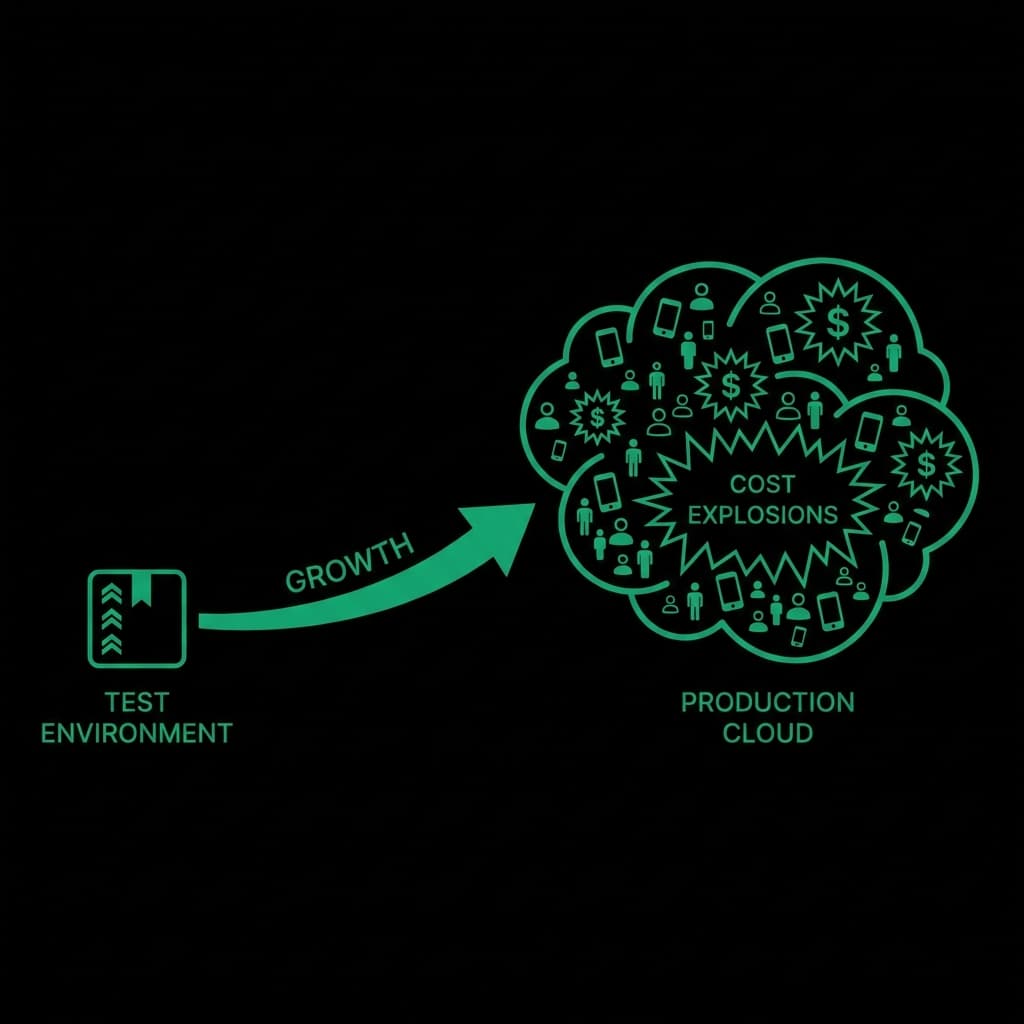

Most LLM cost problems do not appear during development. They appear after deployment.

Production environments introduce scale, unpredictability, and real user behavior, all of which directly affect cost.

This article breaks down the most common LLM cost management mistakes and how teams avoid them.

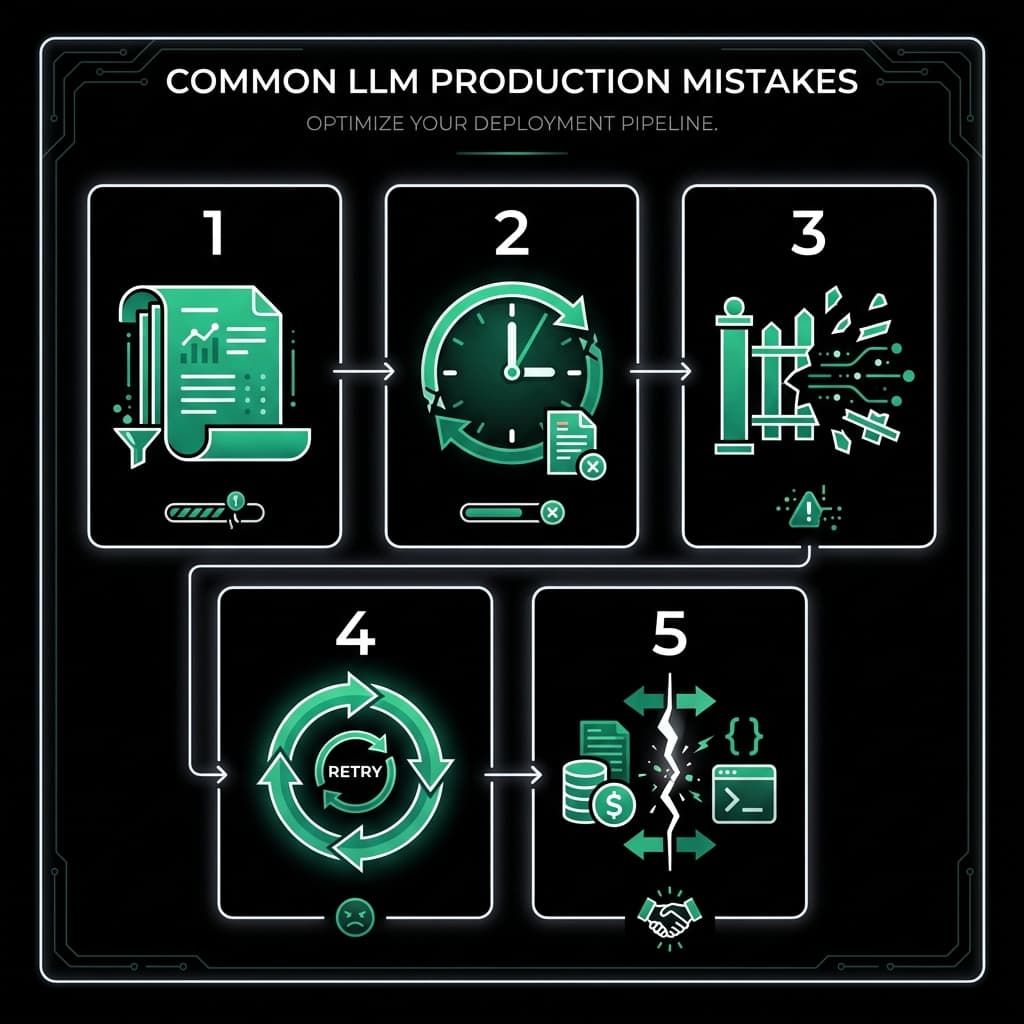

Mistake 1: Assuming Input Size Is Predictable

In production:

- Users paste large content

- Systems forward raw data

- Inputs grow over time

Planning around an "average input size" underestimates worst-case cost, because the largest requests, not the typical ones, drive the spikes.

Fix: Budget for the maximum possible input and output size, not the average.
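To make the gap concrete, here is a minimal sketch that compares an average-case estimate with a worst-case one. The `Pricing` class, the `estimate_cost` function, the prices, and the token counts are all illustrative assumptions, not any provider's real rates or limits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Hypothetical prices in USD per 1,000 tokens (not real provider rates)."""
    input_per_1k: float
    output_per_1k: float


def estimate_cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated cost in USD for a single request."""
    input_cost = (input_tokens / 1000) * pricing.input_per_1k
    output_cost = (output_tokens / 1000) * pricing.output_per_1k
    return input_cost + output_cost


# Assumed prices, for illustration only.
pricing = Pricing(input_per_1k=0.001, output_per_1k=0.002)

# Planning figure based on a "typical" request.
average_cost = estimate_cost(pricing, input_tokens=800, output_tokens=300)

# Planning figure based on the largest request the system would accept.
worst_case_cost = estimate_cost(pricing, input_tokens=100_000, output_tokens=4_000)

print(f"average:    ${average_cost:.4f}")
print(f"worst case: ${worst_case_cost:.4f}")
```

With these assumed numbers, the worst-case request costs roughly 77 times the average one, which is the gap that average-based planning hides.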

Mistake 2: Relying on Post-usage Reports

Reports show:

- What happened

- How much was spent

They do not stop:

- A single large request

- Sudden spikes

- Abuse scenarios

Fix: Enforce limits at request time, not after billing.
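One way to enforce a limit at request time is to reserve the estimated cost against a running budget before the model is called. The sketch below is a hypothetical, in-memory `SpendGuard`. The class name, its methods, and the idea of a single shared budget are assumptions, and a real deployment would likely need shared storage rather than process memory.

```python
import threading


class BudgetExceeded(Exception):
    """Raised when a request would push spend past the configured budget."""


class SpendGuard:
    """Hypothetical in-memory guard that authorizes spend before each call."""

    def __init__(self, budget_usd: float) -> None:
        self.budget_usd = budget_usd
        self.spent_usd = 0.0
        self._lock = threading.Lock()

    def authorize(self, estimated_cost_usd: float) -> None:
        """Reserve the estimated cost, or raise before any money is spent."""
        with self._lock:
            if self.spent_usd + estimated_cost_usd > self.budget_usd:
                raise BudgetExceeded(
                    f"request of ${estimated_cost_usd:.4f} exceeds remaining "
                    f"budget of ${self.budget_usd - self.spent_usd:.4f}"
                )
            self.spent_usd += estimated_cost_usd


guard = SpendGuard(budget_usd=1.00)
guard.authorize(0.60)  # allowed: 0.60 of 1.00 is now reserved

try:
    guard.authorize(0.60)  # would bring the total to 1.20, so it is blocked
except BudgetExceeded as exc:
    print(f"blocked: {exc}")
```

The important property is ordering: the check runs before the model call, so a spike is rejected rather than merely reported.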

Mistake 3: No Per-request Limits

Many teams set:

- Daily budgets

- Monthly caps

But without a per-request limit, a single oversized request can consume a disproportionate share of that budget, in the worst case all of it.

Fix: Define a maximum cost per request and block violations.
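A per-request cap can be sketched as a pre-flight check that estimates the cost of one request and rejects it if the estimate is over the limit. Everything named here is an assumption for illustration: the prices, the cap, and especially the four-characters-per-token heuristic, which is only a rough stand-in for the model's real tokenizer.

```python
# Illustrative values only; substitute real prices and a limit that fits your budget.
MAX_COST_PER_REQUEST_USD = 0.05
INPUT_PRICE_PER_1K_USD = 0.001
OUTPUT_PRICE_PER_1K_USD = 0.002
CHARS_PER_TOKEN = 4  # crude heuristic, not a real tokenizer


class RequestTooExpensive(Exception):
    """Raised when a single request's estimated cost exceeds the cap."""


def estimate_tokens(text: str) -> int:
    """Approximate the token count by rounding len(text) / CHARS_PER_TOKEN up."""
    return max(1, -(-len(text) // CHARS_PER_TOKEN))


def enforce_request_cap(prompt: str, max_output_tokens: int) -> float:
    """Return the estimated cost in USD, or raise if it exceeds the cap."""
    input_tokens = estimate_tokens(prompt)
    cost = (
        (input_tokens / 1000) * INPUT_PRICE_PER_1K_USD
        + (max_output_tokens / 1000) * OUTPUT_PRICE_PER_1K_USD
    )
    if cost > MAX_COST_PER_REQUEST_USD:
        raise RequestTooExpensive(
            f"estimated ${cost:.4f} exceeds cap of ${MAX_COST_PER_REQUEST_USD:.2f}"
        )
    return cost


# A short prompt passes the check.
enforce_request_cap("Summarize this paragraph.", max_output_tokens=200)

# A pasted 400,000-character document is rejected before the model is called.
try:
    enforce_request_cap("x" * 400_000, max_output_tokens=200)
except RequestTooExpensive as exc:
    print(f"blocked: {exc}")
```

Note that the output side is priced at the requested maximum, not the expected length, which keeps the check consistent with worst-case planning.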

Mistake 4: Ignoring Retries and Background Jobs

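Retries multiply cost silently: every retried attempt that reaches the provider may be billed again, even though the caller sees only one result.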

Common causes:

- Network failures

- Timeout retries

- Background jobs without limits

Fix: Apply the same policies to all execution paths.
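One way to bring retries under the same policy is to route every call, interactive or background, through a wrapper that caps both the number of attempts and the total cost across them. The sketch below is a hypothetical helper. The function name, its parameters, and the choice to charge each attempt up front (on the pessimistic assumption that a failed attempt may still be billed) are all assumptions.

```python
import time


class RetryBudgetExceeded(Exception):
    """Raised when another attempt would exceed the total cost cap."""


def call_with_cost_cap(call, cost_per_attempt_usd, max_total_cost_usd,
                       max_attempts=3, base_delay_s=0.0):
    """Run `call`, retrying transient errors until attempts or cost run out.

    Returns a (result, spent_usd) tuple. Each attempt is charged before it
    runs, so failed attempts count toward the cap.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    spent_usd = 0.0
    last_error = None
    for attempt in range(max_attempts):
        if spent_usd + cost_per_attempt_usd > max_total_cost_usd:
            raise RetryBudgetExceeded(
                f"stopped after ${spent_usd:.4f}; cap is ${max_total_cost_usd:.4f}"
            ) from last_error
        spent_usd += cost_per_attempt_usd
        try:
            return call(), spent_usd
        except (TimeoutError, ConnectionError) as exc:
            last_error = exc
            # Exponential backoff between attempts (no delay by default).
            time.sleep(base_delay_s * (2 ** attempt))
    # Attempts are exhausted: surface the last transient error.
    raise last_error


def always_times_out():
    raise TimeoutError("simulated timeout")


try:
    # The cap allows two attempts at $0.01 each; the third is refused.
    call_with_cost_cap(always_times_out, cost_per_attempt_usd=0.01,
                       max_total_cost_usd=0.025, max_attempts=5)
except RetryBudgetExceeded as exc:
    print(f"blocked: {exc}")
```

Because background jobs and user-facing requests would share this wrapper, neither path can retry its way past the cap unnoticed.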

Mistake 5: Treating Cost as a Finance Problem

Cost control is often delegated to finance, which only sees spend after it has been billed.

In reality:

- Cost is generated by code

- Control must exist in the request lifecycle

Fix: Treat cost as a runtime concern, not a billing concern.

Conclusion

LLM cost management failures are architectural, not accidental.

Teams that succeed design cost control into the system itself.