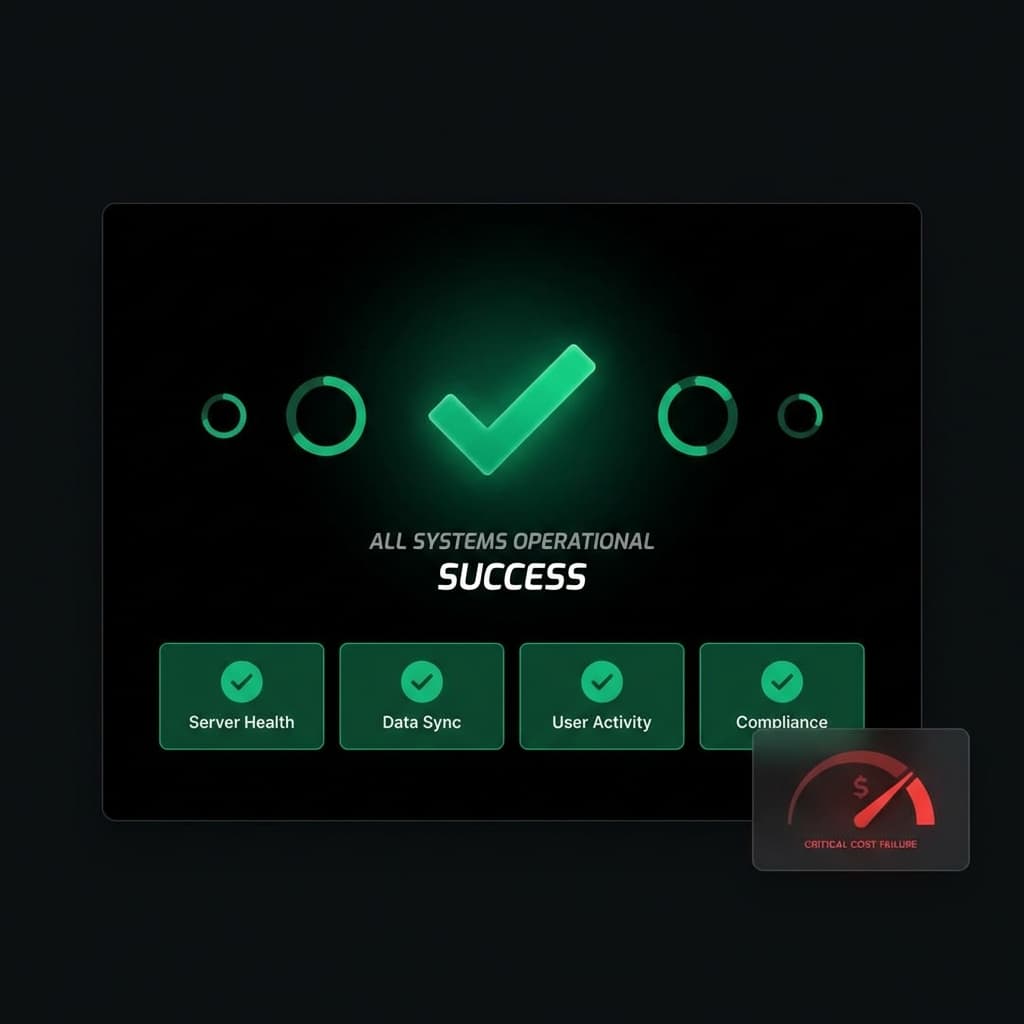

One of the most dangerous phrases in AI-powered production systems is: "Everything looks fine."

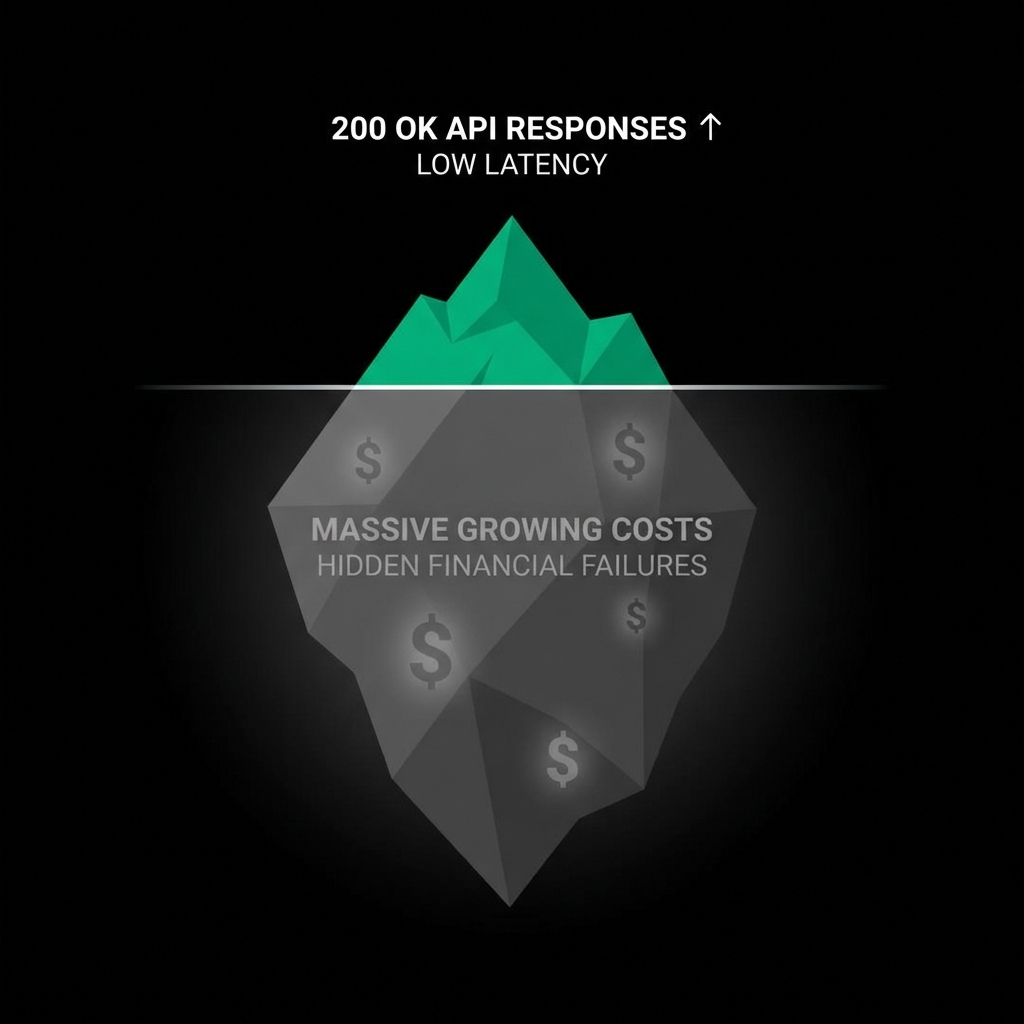

Requests are returning 200s. Latency is stable. Users are getting responses. From an engineering perspective, the system is healthy. From a cost perspective, it might be completely out of control.

Financially Successful Requests

LLM APIs introduce a new kind of failure mode: financially successful requests.

A request can be technically correct and still catastrophically expensive. Large prompts, verbose responses, and inefficient usage patterns all generate valid outputs while silently increasing cost.

This creates a blind spot for teams used to traditional metrics. We're trained to look for errors. We're trained to respond to failures. AI cost issues rarely look like failures.

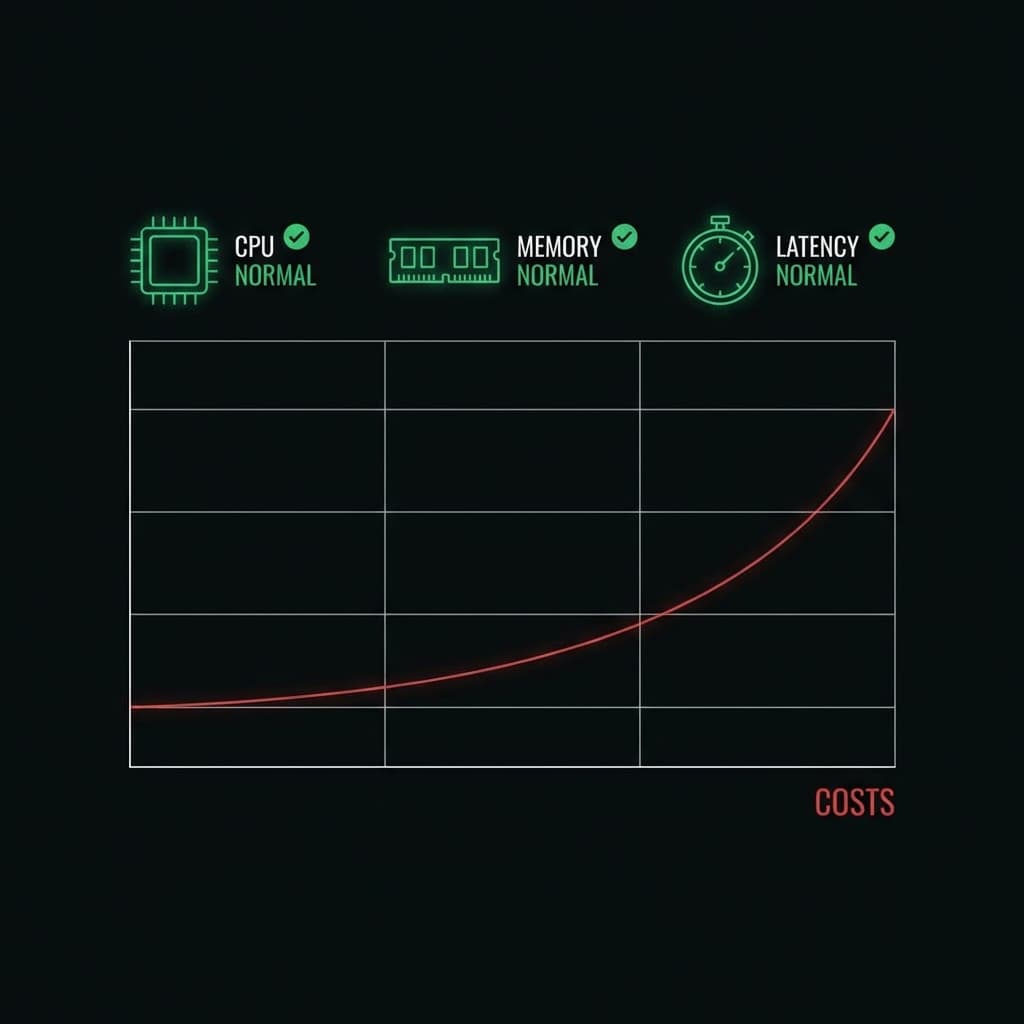

Death by a Thousand Small Inefficiencies

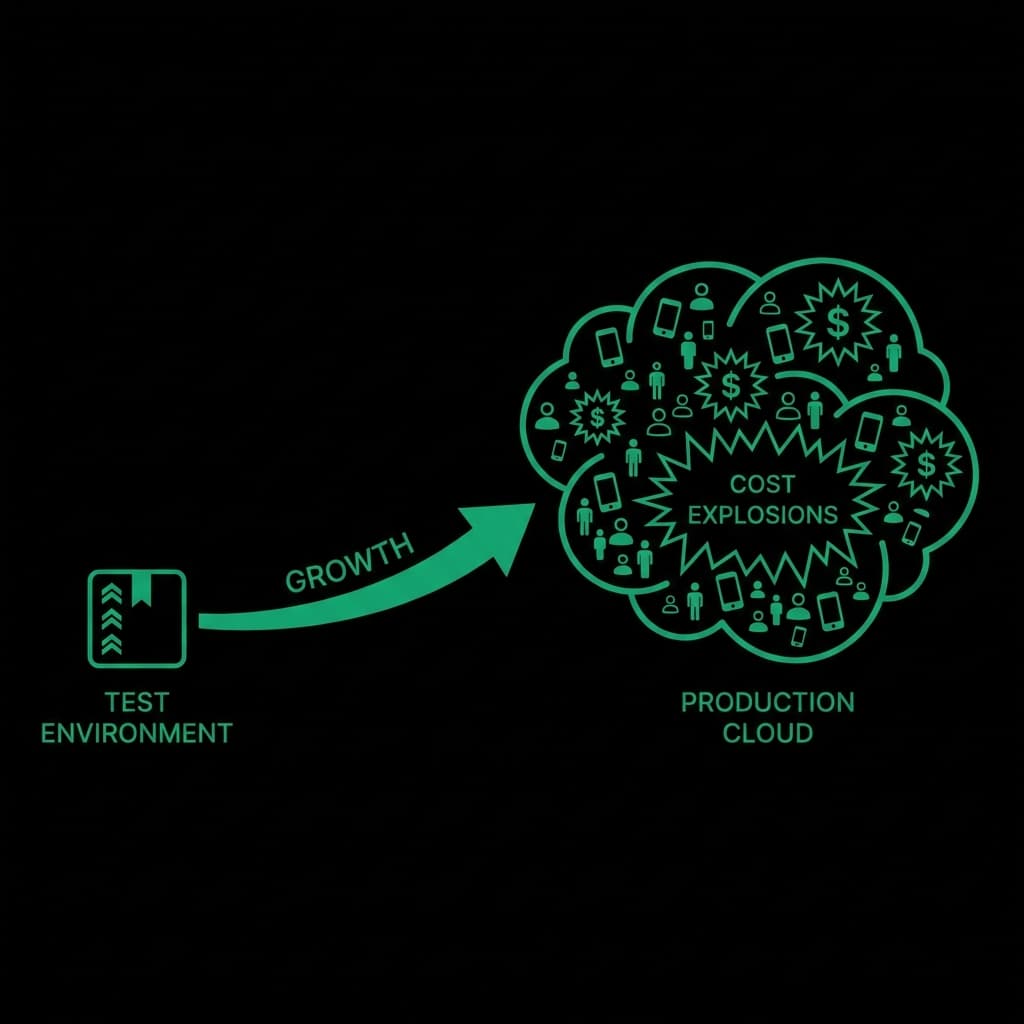

In many systems, cost grows through small inefficiencies rather than obvious mistakes. A prompt template slowly expands over time. An internal tool is reused in ways it wasn't designed for. A new feature relies on LLM calls more heavily than anticipated.

Because requests succeed, no alarms are triggered. The system continues operating normally while the invoice grows.

The Attribution Problem

Another problem is attribution. When costs spike, it's often unclear why. Which endpoint caused it? Which user? Which feature? Provider dashboards usually aggregate usage at too high a level to answer these questions quickly.

This lack of granularity delays response. By the time teams identify the root cause, the damage is already done.

Redefining Success

The most effective teams redefine what "success" means for an AI request. Success isn't just "did it return a response?" It's "did it return a response within acceptable cost boundaries?"

This mindset shift changes how systems are designed. Requests are evaluated before execution. Expensive requests can be blocked, downgraded, or routed differently. Abuse patterns can be detected even without inspecting content.

This approach doesn't slow development. It actually speeds it up. Engineers can iterate freely because they know there are guardrails preventing catastrophic outcomes.

In AI systems, silence is not safety. Visibility without control is not protection. The only reliable defense is enforcing expectations before cost is incurred.